Strange and Magical world

This IS a reproduction

I've been trying to reproduce the slow nautilus startup times that Federico had been seeing on the CVS version of Gnome that I have checked out. I was reading through code first, but that was really the wrong thing to do. I should have actually timed how long things were taking, and THEN if it was still a problem read through the code.

That's what I did.

On the good news/weird new side, my total nautilus startup times (from the beginning of nautilus main to the expose_event sent to the icon_container) have been much much lower than the times that Federico reported in his blogs. My total startup time avg was ~2secs. His time between gtk_main and the icon_container expose event was 2 seconds! (His time was only including the time between when control was handed over to gtk, and when things were finally painted. Mine included the whole nautilius initialization AND the time for gtk to paint things.)

I don't know WHY things would be so much better. I really wonder if strace was skewing his results like it was doing for the X server. I was also doing this from a WARM cache (his might have been from a cold cache.) I'm not sure.

Highly Variability

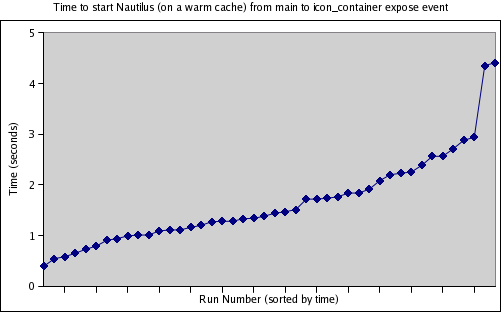

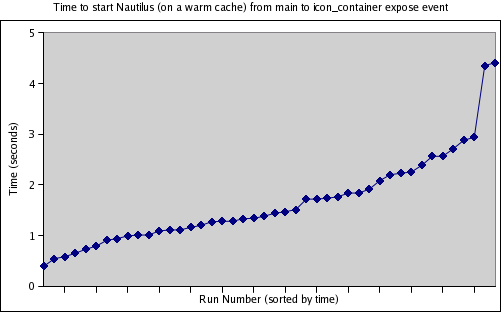

I wrote a few wrapper scripts to automatically launch gnome, time nautilus's startup, and then gather the results. I was curious to see how consistent the startup time was.

Short answer: It wasn't. I had a minimum time of 0.398 sec and a maximum time of 4.411 sec.

I've sorted the 44 runs that I made by time, so here's a picture of it:

When I used to do SPEC CPU benchmarking, the variability from run-to-run would drive us crazy. Some applications would be very fast in one run, and slow in another. Ideally, you could have a run where all of the applications ran at their top speed. It was frustrating.

The compiler writers saw it differently. If there was a variation, that meant it was possible for the application to always run as fast as the fastest time if they could only figure out what was slowing it down in the other times.

The nice thing about the SPEC runs was that each of the programs were independent, so you really should be able to optimize things for each app to run at it's ideal time. Gnome is a little different, since it is a complicated interlocking system. The fast runs of Nautilus MIGHT be because some other startup program has already initialized the components that nautilus needs. Hower, it does say that we have the possiblity for a VERY fast nautilus startup.

Variablility makes it REALLY hard to optimize things, and can often times hide a race, so it really needs to be investigated.

Ideally we could get nautilus to start in 0.398 secs. Why doesn't it do it every time? (This is what I'll be looking at for the next blog entry. Stay tuned... )

Cold cache vs. Warm cache

So far, I've been looking at the warm cache startup time of Nautilus. Why?

Basically I see the warm cache time as the "best we can do". As we eliminate I/O in the cold cache time, we will eventually get closer and closer to the warm cache time.

So while it does make sense to also optimize the cold cache time (as this will be what the first user logging on to a system will experience), starting with the optimization of the warm cache will allow me to understand and optimize the different parts of nautilus, and then see how adding disk I/O slows them down. Also, I/O is scary. ;-) (Ie, Once I/O is added to the mix, things get much less repeatable. Although the "flush the cache" app should help with that.)

I've been trying to reproduce the slow nautilus startup times that Federico had been seeing on the CVS version of Gnome that I have checked out. I was reading through code first, but that was really the wrong thing to do. I should have actually timed how long things were taking, and THEN if it was still a problem read through the code.

That's what I did.

On the good news/weird new side, my total nautilus startup times (from the beginning of nautilus main to the expose_event sent to the icon_container) have been much much lower than the times that Federico reported in his blogs. My total startup time avg was ~2secs. His time between gtk_main and the icon_container expose event was 2 seconds! (His time was only including the time between when control was handed over to gtk, and when things were finally painted. Mine included the whole nautilius initialization AND the time for gtk to paint things.)

I don't know WHY things would be so much better. I really wonder if strace was skewing his results like it was doing for the X server. I was also doing this from a WARM cache (his might have been from a cold cache.) I'm not sure.

Highly Variability

I wrote a few wrapper scripts to automatically launch gnome, time nautilus's startup, and then gather the results. I was curious to see how consistent the startup time was.

Short answer: It wasn't. I had a minimum time of 0.398 sec and a maximum time of 4.411 sec.

I've sorted the 44 runs that I made by time, so here's a picture of it:

When I used to do SPEC CPU benchmarking, the variability from run-to-run would drive us crazy. Some applications would be very fast in one run, and slow in another. Ideally, you could have a run where all of the applications ran at their top speed. It was frustrating.

The compiler writers saw it differently. If there was a variation, that meant it was possible for the application to always run as fast as the fastest time if they could only figure out what was slowing it down in the other times.

The nice thing about the SPEC runs was that each of the programs were independent, so you really should be able to optimize things for each app to run at it's ideal time. Gnome is a little different, since it is a complicated interlocking system. The fast runs of Nautilus MIGHT be because some other startup program has already initialized the components that nautilus needs. Hower, it does say that we have the possiblity for a VERY fast nautilus startup.

Variablility makes it REALLY hard to optimize things, and can often times hide a race, so it really needs to be investigated.

Ideally we could get nautilus to start in 0.398 secs. Why doesn't it do it every time? (This is what I'll be looking at for the next blog entry. Stay tuned... )

Cold cache vs. Warm cache

So far, I've been looking at the warm cache startup time of Nautilus. Why?

Basically I see the warm cache time as the "best we can do". As we eliminate I/O in the cold cache time, we will eventually get closer and closer to the warm cache time.

So while it does make sense to also optimize the cold cache time (as this will be what the first user logging on to a system will experience), starting with the optimization of the warm cache will allow me to understand and optimize the different parts of nautilus, and then see how adding disk I/O slows them down. Also, I/O is scary. ;-) (Ie, Once I/O is added to the mix, things get much less repeatable. Although the "flush the cache" app should help with that.)

8 Comments:

Fantastic work. I can't wait to see the results of your profiling and hopefully enhancements to tools such as sysprof to help us all perform this type of task.

In addition to nautilus startup, I've noticed that getting the properties of a file (right click->properties) is very variable - occasionally instant, but usually taking a few seconds. I've quickly just ran sysprof many times and looked at the 'longest' of these. The root of the trees for me always seem to lead to pthread_mutex_[un]lock. These don't take a long time themselves, but perhaps the time is spent waiting? I wish sysprof would show more details about what's actually being called and especially show a rough count of how many times these things have been called.

By Anonymous, at 11:48 AM

Anonymous, at 11:48 AM

Hello,

Tank again for your work, and continue to write on this blog, it's very interesting.Can you publish your wrapper scripts ? I will like to test on my laptop.

"I've sorted the 44 runs that I made by time"

How look the picture without sorting the result by time ?

By Mariot Chauvin, at 6:22 AM

Mariot Chauvin, at 6:22 AM

mark:

Very interesting about the variable propertires speed.

I think that adding trace statements to the nautilus code is really the way to go to track these types of problems.

Unfortunately, it is hard to know what to trace until you hit a particular problem.

federico:

Ah. That makes sense. From the user's point of view the cold-startup time is ultimately probably the best metric.

(It is kinda annoying that you have to reboot to test this stuff though, but that's the way it goes..)

moksha:

I don't have access to the data/scripts right now, but I'll try to submit them tonight.

By ezolt, at 6:40 AM

ezolt, at 6:40 AM

bulldoglist.com

hardhut.com

pornstar.com

adultbuffet.com

jasara.com

xmoma.com

searchgals.com

soft17.com

hairydivas.com

naughtythoughts.com

By Anonymous, at 10:26 AM

Anonymous, at 10:26 AM

defacialize.com

indianpassion.com

free black lesbian sex video

free lesbian action video

hairypussypost.com

de foto sexo video xxx y

bigassadventure.com

By Anonymous, at 11:15 PM

Anonymous, at 11:15 PM

teenboat

chat single teen

pink world

pic sex teen

bbs teen

moms teaching teen

teenboat

free teen porn

blow job teen

chat rom teen

butt teen

pregnancy teen

free sex teen video

bedding teen

angel teen

By Anonymous, at 8:24 AM

Anonymous, at 8:24 AM

irydivas-com.blogspot.com/>hairydivas.com

By Anonymous, at 12:59 AM

Anonymous, at 12:59 AM

By Anonymous, at 9:59 AM

Anonymous, at 9:59 AM

Post a Comment

<< Home